How to Develop a Test Blueprint - and Specifically, a Good One

In this blog, we provide a comprehensive guide to why a test is made, what distinguishes a good test, and how to develop a test in detail.


Developing an employment test, whether it is a personality, behavior, job-fit, or cognitive assessment, is a time-consuming process. Many factors need to be considered, and various experts must be involved to ensure the test measures what it needs to measure and yields reliable results.

This article covers why a test is made, what the characteristics of a good test are, and the test development process in detail. We will cover the purpose of assessment, the traits of a good test, and the first part of the test development process, i.e. specifying the test objective.

What is the purpose of assessments?

Assessing a skill or body of knowledge is as important as teaching or learning it. Assessment, testing, or knowledge evaluation is not a new concept. We have all at some point taken pre-instructional assessment tests (e.g. central examinations for admission into college), interim mastery tests (mid-course assessments to check progress), and mastery tests (end-of-course evaluations). We have also often asked ourselves and academicians, ‘What is the purpose of these tests?’

Herman, Aschbacher, and Winters (1992) point out that “People [students] perform better when they know the goal, see models, [and] know how their performance compares to the standard.” The basic purpose of all tests, regardless of how the test is used and the outcome associated with it, is discrimination, i.e. distinguishing the level of aptitude, abilities, and skills among the test takers. For professional (employment) and academic purposes, the objective would include such discriminators as proficiency, analytical and reasoning skills, technical aptitude, and behavioral traits, among many others.

The two most popular approaches to facilitate this discrimination process are the norm-referenced approach, which is commonly understood as the percentile system wherein the relative performance of test-takers is considered, and the criterion-referenced approach wherein the performance of test-takers is assessed against a pre-determined benchmark.
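The difference between the two approaches can be sketched in a few lines of Python. This is only an illustration: the scores, the cutoff of 65, and the function names `percentile_rank` and `meets_benchmark` are hypothetical, and the percentile rank is computed here as the share of test-takers scoring strictly below a given score, which is one of several conventions in use.

```python
def percentile_rank(score, all_scores):
    """Norm-referenced view: percentage of test-takers scoring strictly below `score`."""
    below = sum(1 for s in all_scores if s < score)
    return 100.0 * below / len(all_scores)


def meets_benchmark(score, cutoff):
    """Criterion-referenced view: compare the score against a fixed benchmark."""
    return score >= cutoff


# Hypothetical raw scores and a hypothetical pre-determined benchmark.
scores = [42, 55, 61, 61, 70, 78, 83, 90]
CUTOFF = 65

for s in (61, 83):
    print(s, percentile_rank(s, scores), meets_benchmark(s, CUTOFF))
```

The same raw score can look quite different under the two views: a percentile rank changes whenever the comparison group changes, whereas the benchmark decision does not.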

What makes a test a good test?

- Variance in scores: The goal of discrimination is achieved only if there is sufficient variance in the scores of the test takers. A test that is too tough results in all test takers scoring low marks, while one that is too easy leads to uniformly high scores. In either case the test fails to discriminate on any of the criteria, so neither test is considered good.

- Reliability: Reliability is a measure of a test’s consistency—both over a period of time as well as internal consistency. It measures the precision of test scores or the extent of measurement error in the test.

- Validity: Validity is an indicator of how well an assessment is measuring what it is supposed to measure. In other words, it measures a test’s usefulness.

- Truth in testing/integrity: A good test has integrity and transparency built into it at multiple stages.

  - While the test is being developed, it should be reviewed by a number of experts to minimize developer bias. Once the test is developed, it is reviewed on the basis of its content and scoring.

  - After the test is completed, scores are released to all test takers, and due process is defined for any test taker accused of cheating or other irregularities.

- Standardization: This is the process of benchmarking by conducting the test on a representative population to see the scores that are typically obtained. This helps in setting benchmarks and enables a test taker to assess his/her score by comparing it to the standard scores.

- Leak-proof: Tests are fraught with the possibility of questions being leaked and accessed by those who have not yet taken the test, which gives some test takers an unfair advantage. A question bank, usually 20-25 times the size of a test, is developed so that any particular question appears only infrequently. Adaptive tests also ensure that no two test papers are alike, as the questions administered to a candidate depend on his/her aptitude and skill.
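Two of the traits above, variance in scores and internal-consistency reliability, can be estimated directly from a response matrix. The sketch below uses Cronbach's alpha, a widely used internal-consistency statistic; the article does not say which reliability measure is used in practice, so treat the choice as an assumption. The response data and the function name `cronbach_alpha` are hypothetical.

```python
from statistics import pvariance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of rows (one row of item scores per test-taker).

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores))
    Undefined (division by zero) if every test-taker has the same total score.
    """
    k = len(responses[0])
    item_vars = [pvariance([row[i] for row in responses]) for i in range(k)]
    total_var = pvariance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical data: 5 test-takers, 4 items scored 1 (correct) or 0 (incorrect).
responses = [
    [1, 1, 1, 1],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [0, 0, 0, 0],
]

totals = [sum(row) for row in responses]
print("variance of total scores:", pvariance(totals))
print("Cronbach's alpha:", round(cronbach_alpha(responses), 3))
```

A total-score variance near zero signals a test that is too easy or too hard to discriminate, and alpha cannot be computed at all when the variance is exactly zero.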


But how are tests designed?

A test is made up of various individual questions, so it follows naturally that for the test to be good, each individual question also needs to be good. Each test question must meet three requirements for it to be classified as a good question.

- First, the question must evaluate an important aspect, an area that is crucial for the test taker to be good at in order to succeed in the outcome associated with the assessment e.g. job, further studies, etc. However, question quality is not solely governed by the aspect it covers.

- Second, it is also essential that the questions are well structured, free of flaws that give the examinee an unintended advantage, and of an appropriate difficulty level.

- And third, the question should rate high on both subjective and objective evaluations and adhere to standards.
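One way to make the "appropriate difficulty" and "objective evaluation" requirements concrete is classical item analysis. The sketch below computes a difficulty index (the proportion answering correctly) and an upper-lower discrimination index for each question. The article does not name these statistics, so this is an assumed illustration; the data, the function names, and the 27% group size (a common convention in classical item analysis) are all hypothetical choices.

```python
def item_difficulty(responses, item):
    """Proportion of test-takers answering `item` correctly (near 0 = hard, near 1 = easy)."""
    return sum(row[item] for row in responses) / len(responses)


def item_discrimination(responses, item, fraction=0.27):
    """Upper-lower index: difficulty in the top-scoring group minus the bottom group."""
    ranked = sorted(responses, key=sum, reverse=True)  # best total score first
    n = max(1, round(len(ranked) * fraction))          # size of each comparison group
    upper, lower = ranked[:n], ranked[-n:]
    return item_difficulty(upper, item) - item_difficulty(lower, item)


# Hypothetical data: 8 test-takers, 4 items scored 1 (correct) or 0 (incorrect).
responses = [
    [1, 1, 1, 1],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 1, 0],
    [1, 0, 0, 1],
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [0, 0, 0, 1],
]

for item in range(4):
    print(item, item_difficulty(responses, item), item_discrimination(responses, item))
```

In this made-up data the last item has a discrimination index of zero: strong and weak test-takers answer it correctly equally often, so it would be a candidate for review even though its difficulty looks reasonable.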

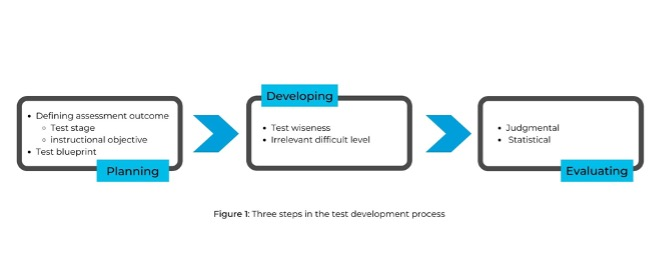

Figure 1: Three steps in the test development process

Specifying the objective

The first step in developing a test is to define the outcome or objective that will be used as the basis for discriminating between test-takers. This begins with defining the stage at which the test will be administered, i.e.

- Pre-instructional: Tend to cover a broad range of skills and topics

- Interim Mastery: Tend to be brief and specific

- Mastery: Tend to be infrequent but very critical, having long term implications

To illustrate, employment tests would be categorized as pre-instructional tests if the purpose is to assess the trainability of the workforce and would be classified as mastery tests if they are being used to shortlist candidates on the basis of their skills/knowledge.

Understanding the instructional objective is also crucial to arriving at the testing objective. This involves researching the curriculum and teaching methodology to draw insights about what the students are learning and the purpose of the instruction (fundamental concepts, advanced concepts, vocational training, etc.).

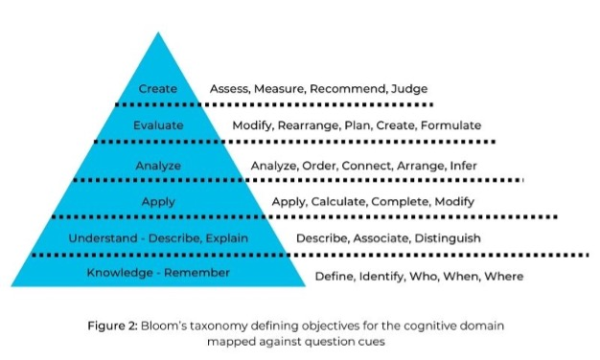

This leads to defining the objective or outcome of the assessment. Figure 2 showcases one of the most widely accepted lists of learning objectives, as given by Bloom (in the triangle), along with the generally accepted question cues for each of these learning objectives (on the right).

Figure 2: Bloom’s taxonomy defining objectives for the cognitive domain mapped against question cues

Categorization of learning objectives is done by an independent panel of experts. At least two subject matter experts work on the categorization independently, and their classifications are then compared. If the classifications differ on more than two counts, additional experts are consulted so that a consensus can be reached.
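The comparison step described above can be sketched as follows. The objectives, the category labels assigned by each expert, and the function names are hypothetical, and the sketch assumes both experts classified the same set of objectives. The threshold of two differences comes from the rule stated above.

```python
def disagreements(expert_a, expert_b):
    """Objectives that the two experts placed in different categories.

    Assumes both dicts cover the same objectives.
    """
    return sorted(obj for obj in expert_a if expert_a[obj] != expert_b.get(obj))


def needs_additional_experts(expert_a, expert_b, max_differences=2):
    """Escalate only when the classifications differ on more than `max_differences` counts."""
    return len(disagreements(expert_a, expert_b)) > max_differences


# Hypothetical independent classifications of four learning objectives.
expert_a = {
    "Define accrual": "Knowledge",
    "Explain the matching principle": "Understanding",
    "Prepare a balance sheet": "Application",
    "Classify an expense": "Understanding",
}
expert_b = {
    "Define accrual": "Knowledge",
    "Explain the matching principle": "Understanding",
    "Prepare a balance sheet": "Application",
    "Classify an expense": "Application",
}

print(disagreements(expert_a, expert_b))
print(needs_additional_experts(expert_a, expert_b))
```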

Once the learning objectives have been defined, the next task is to formulate a test blueprint that specifies the learning outcomes for each domain area and lists the skills that need to be tested for each domain along with the relative importance of each. The sample below gives a test blueprint for the Accounting domain with only three learning objectives.

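Sample test blueprint (columns: Area, Knowledge, Understanding, Application; counts are listed in the order they appear, and for rows with fewer than three counts the column placement is not recoverable):

- Accounting Principles: 1, 2, 2

- Matching Balance Sheet: 1, 1

- Accrual Accounting: 1, 2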

Essentially a test blueprint serves as a ready reckoner for the following:

- Determining the total number of questions to be developed with domain level break-up

- Determining the cognitive-level break-up of those questions

- Checking that category weightage is in keeping with the importance given during the instructional phase
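To show how a blueprint answers these three questions, here is a minimal sketch that stores the blueprint as a nested dictionary and derives the totals from it. The question counts are hypothetical and are not the figures from the sample blueprint above; the function names are likewise made up for illustration.

```python
# Hypothetical blueprint: area -> cognitive level -> number of questions.
blueprint = {
    "Accounting Principles": {"Knowledge": 2, "Understanding": 3, "Application": 5},
    "Matching Balance Sheet": {"Knowledge": 1, "Understanding": 2, "Application": 2},
    "Accrual Accounting": {"Knowledge": 1, "Understanding": 2, "Application": 2},
}


def domain_breakup(bp):
    """Number of questions to develop for each domain area."""
    return {area: sum(levels.values()) for area, levels in bp.items()}


def cognitive_breakup(bp):
    """Number of questions at each cognitive level, summed across areas."""
    totals = {}
    for levels in bp.values():
        for level, count in levels.items():
            totals[level] = totals.get(level, 0) + count
    return totals


def category_weights(bp):
    """Share of the whole test given to each area, to compare with instructional emphasis."""
    per_area = domain_breakup(bp)
    total = sum(per_area.values())
    return {area: count / total for area, count in per_area.items()}


print("total questions:", sum(domain_breakup(blueprint).values()))
print("by domain:", domain_breakup(blueprint))
print("by cognitive level:", cognitive_breakup(blueprint))
print("weights:", category_weights(blueprint))
```

Keeping the counts in one structure means the domain totals, cognitive-level totals, and weights always stay consistent with each other when the blueprint is revised.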

Developing a test is a complicated process, as it involves many layers of planning, testing, and evaluating. For 40 years, SHL’s team of experts has tirelessly developed our assessments so that we can help you build a workforce with the skills, motivations, and talent you need.

Book a demo with one of our experts so we can help you assess and hire the best talent for your teams!

Originally Published April 16, 2019, by Aspiring Minds, now SHL.