How Does SHL Build and Deliver Responsible AI-based Hiring Solutions?

Navigating the complexities and considerations before implementing responsible AI solutions for hiring.

In the knowledge-driven economy, effective hiring is a critical factor for organizational growth and industry impact. Embracing the potential of AI-powered assessments, companies strive to evaluate candidates at scale. However, amid the hype around highly efficient AI-based solutions, a stark reality emerges: over time, these systems may suffer performance decay and give rise to algorithmic bias.

This blog sheds light on the potential risks associated with deploying AI solutions, offering invaluable considerations for companies before implementation. Furthermore, it unveils SHL's innovative solutions that effectively address these challenges, paving the way for successful talent assessment through AI.

What causes the degradation of an AI model used for hiring?

It is often said that “Your AI models are as good as the data they were trained on.”

The root cause of performance degradation of an AI model used for hiring is that real-world hiring data is constantly changing, while the model architecture and parameters remain fixed and can become outdated over time. This conflict leads to a decline in performance.

This can happen in multiple ways:

- Shifting applicant demographics

Models trained on even the best historical data experience performance degradation over time if there are changes in the candidate population. It is crucial to recognize that demographic shifts can significantly impact the talent pool.

For example, we see more women applying for roles in the historically male-dominated technology field. A rigid AI model, trained primarily on male candidates, would be unable to accurately evaluate skilled female applicants. Algorithms trained in countries with high college graduation rates may fail to recognize equally qualified candidates without degrees from areas with less access to higher education.

- Rapid advances in business practices and technologies

In today's job market, the skills and qualities needed for different roles are changing rapidly. What used to be important qualifications for a job may now be outdated.

For example: AI-powered video interviews and simulations have the potential to modernize sales assessments, but many current models prioritize traditional traits like assertiveness and persistence over emerging skills like data analysis and CRM expertise. This can lead to candidates with strong communication skills receiving high scores, even if they lack the necessary technical abilities. Consequently, qualified applicants with modern skill sets may be overlooked due to a misalignment with the traits the AI is optimized to evaluate.

- Production-training divergence

Assumptions in controlled training environments differ from real-world conditions, leading to a mismatch in AI hiring model performance. Biases can arise from small sample sizes and lack of uniform representation, affecting the effectiveness of AI hiring models.

For example, AI models often struggle to adapt to real-world resumes that have gaps, overlapping roles, or unconventional formats, such as white fonts. To make accurate candidate recommendations, AI systems need constant re-training and adaptation to evolving dynamics. Anticipating and mitigating model degradation should be a core aspect of any hiring organization's AI strategy.

SHL Labs’ secret recipe for making responsible AI systems in hiring

Within SHL Labs, we have developed a robust AI governance framework that draws upon leading industry AI frameworks from the Society for Industrial and Organizational Psychology (SIOP) and the World Economic Forum. Our framework aims to ensure the continual quality of our AI models as the underlying data and conditions evolve. We collaborate closely with our clients to responsibly govern AI throughout its lifecycle, from data collection to ongoing monitoring of deployed models. We believe maintaining model quality over time is both a technological and moral imperative.

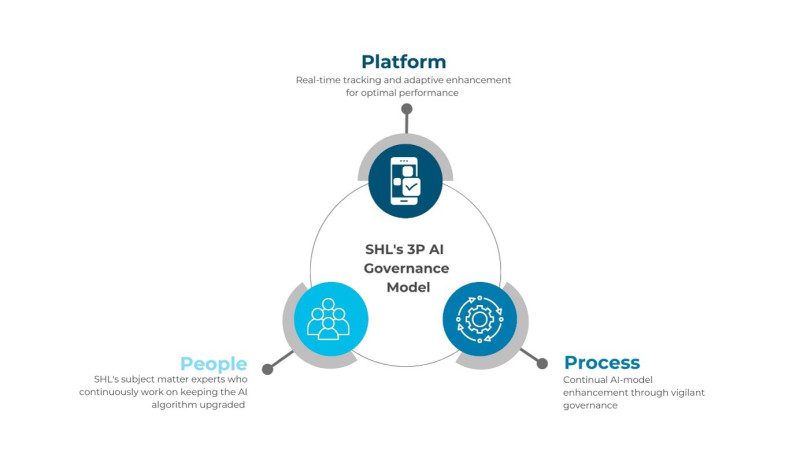

Our framework, based on the 3 Ps principle (Platforms, People, Process), delivers AI systems that are effective from day one and adapt responsibly to changing conditions.

Platforms

We extensively use MLOps (Machine Learning Operations) platforms that allow continuous tracking and improvement of our clients’ AI model quality.

- We utilize performance dashboards with real-time metrics to monitor AI model quality in recruitment, including accuracy, drift, adverse impact, and other key indicators.

- Statistical tests like the Kolmogorov-Smirnov test quantify the drift between model score distributions over time.

- We track feature relevance to detect early signs of drift or degradation in the model, analyzing how various factors change over time and measuring their relationships through statistical tests. This helps us identify cases where previously useful patterns change and new unmodeled correlations emerge.

- We use automated pipelines for continuous model improvement, allowing for easy retraining on the latest real-world data. The refreshed models are then reviewed by our quality assurance team prior to deployment.

- Our annotation platforms offer optimized interfaces for rating various data types. Distinct highlighting prevents duplication of work, and each training data sample is rated by two independent subject-matter experts, with a third serving as a tie-breaker.

These platforms enable proactive governance and ensure that our AI models stay current as the recruiting landscape evolves, delivering continuous improvement to our clients.
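To make the drift check more concrete, here is a minimal Python sketch of a two-sample Kolmogorov-Smirnov comparison between an older and a newer batch of model scores. It is an illustration only, not SHL's production code: the function name `ks_two_sample`, the sample scores, and the asymptotic p-value approximation are all assumptions chosen for the example.

```python
from bisect import bisect_right
from math import exp, sqrt


def ks_two_sample(reference, current):
    """Two-sample Kolmogorov-Smirnov test.

    Returns (statistic, p_value). The statistic is the largest gap between
    the two empirical CDFs; the p-value uses the asymptotic Kolmogorov
    distribution, so treat it as approximate for small samples.
    """
    ref, cur = sorted(reference), sorted(current)
    n, m = len(ref), len(cur)
    if n == 0 or m == 0:
        raise ValueError("both samples must be non-empty")

    # The maximum CDF gap is always attained at one of the observed values.
    statistic = 0.0
    for x in set(ref) | set(cur):
        gap = abs(bisect_right(ref, x) / n - bisect_right(cur, x) / m)
        statistic = max(statistic, gap)

    effective_n = sqrt(n * m / (n + m))
    lam = (effective_n + 0.12 + 0.11 / effective_n) * statistic
    if lam < 0.3:
        # The series below converges slowly here, and the true value is ~1.
        return statistic, 1.0
    series = sum((-1) ** (k - 1) * exp(-2.0 * (k * lam) ** 2) for k in range(1, 101))
    return statistic, min(1.0, max(0.0, 2.0 * series))


if __name__ == "__main__":
    # Hypothetical model scores: last quarter (reference) vs. this week (current).
    last_quarter = [0.42, 0.55, 0.61, 0.48, 0.70, 0.66, 0.52, 0.59]
    this_week = [0.71, 0.78, 0.66, 0.83, 0.74, 0.69, 0.80, 0.77]
    d, p = ks_two_sample(last_quarter, this_week)
    print(f"KS statistic={d:.3f}, approx. p-value={p:.4f}")
```

A large statistic with a small p-value suggests the score distribution has moved and the model may need review. In practice a maintained routine such as SciPy's `ks_2samp` would likely be preferable to a hand-rolled version; the pure-Python form is used here only to keep the example self-contained.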

People

SHL’s subject-matter experts (AI Operations, R&D Engineers, and I/O Psychologists) closely and continuously examine our platforms to ensure model quality and accuracy.

- AI Operations: A dedicated team upholds data ethics, addressing imbalances in gender, race, and biographical data through targeted sample collection and pre-processing. They employ professional annotators and subject-matter experts to ensure accurate and validated ratings of data subsets.

- R&D Engineers: They develop and improve AI models, creating guidelines for data collection, annotation, and quality assurance. They stay updated with the latest advancements in the field to continuously evolve development and testing methods.

- I/O Psychologists: This team applies organizational psychology and research methods to understand and adapt to changing employee behaviors, improving employee performance and workplace communication.
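The annotation workflow described under Platforms, where two independent experts rate each sample and a third acts as tie-breaker, can be sketched in a few lines of Python. This is a hedged illustration rather than SHL's actual tooling: the function names `adjudicate` and `agreement_rate` are hypothetical, and the rule that a sample is escalated when all three ratings differ is an assumption.

```python
def adjudicate(rating_a, rating_b, tie_breaker=None):
    """Resolve one sample's label from two independent ratings.

    Returns the shared rating when the first two raters agree. On
    disagreement, returns the tie-breaker's rating only if it sides with one
    of them. Returns None when the sample is still unresolved (no tie-breaker
    yet, or all three ratings differ) and needs escalation.
    """
    if rating_a == rating_b:
        return rating_a
    if tie_breaker is not None and tie_breaker in (rating_a, rating_b):
        return tie_breaker
    return None


def agreement_rate(rating_pairs):
    """Share of samples on which the two independent raters agreed."""
    pairs = list(rating_pairs)
    if not pairs:
        raise ValueError("at least one rating pair is required")
    return sum(a == b for a, b in pairs) / len(pairs)
```

Tracking the agreement rate alongside the resolved labels gives an early signal when rating guidelines are ambiguous, since a falling rate means more samples are reaching the tie-breaker.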

Process

To ensure our AI systems adhere to industry best practices and the rigorous quality standards set by psychological bodies such as the British Psychological Society (BPS) and SIOP, we follow a robust development process and have exhaustive ongoing assurance protocols.

Development: We leverage the industry best practices created by SIOP and audit our AI systems against the strict quality criteria set by the BPS.

- We take a data-centric approach and intentionally engineer high-quality data sets that are systematically audited to ensure they are representative of the candidate population and minimally biased.

- To ensure our AI systems are aligned with human decision-making, we use expert psychologist raters to create behaviorally anchored rating scales before human rating begins, which makes model creation more objective.

- All expert human ratings are audited against the strict criteria set by psychological standards bodies to ensure accuracy and objectivity.

- AI systems undergo a thorough audit before deployment to align with expert ratings and significantly reduce systematic bias, with the process documented in technical manuals.

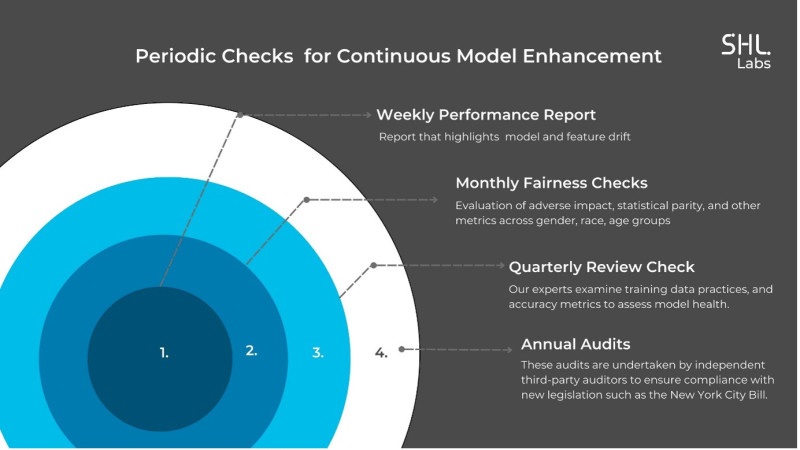

Ongoing Assurance: We implement rigorous governance protocols on both ongoing and periodic cycles to enable continuous model enhancement.

- Weekly performance reports that monitor model and feature drift. These automated reports highlight changes that need human intervention.

- Monthly fairness checks across subgroups to proactively uncover biases. We evaluate adverse impact, statistical parity, and other metrics across gender, race, and age groups.

- Quarterly reviews by our R&D engineers and I/O psychologists, where we examine training data practices and accuracy metrics to assess model health.

- Annual bias audits are undertaken by independent third-party auditors to ensure compliance with new legislation such as New York City's Local Law 144 on automated employment decision tools.
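The monthly fairness checks mention adverse impact and statistical parity. As a rough sketch of how these two metrics are commonly computed from selection outcomes, consider the Python below. The function names, the input format, and the 0.8 threshold (the widely used four-fifths rule of thumb from the US EEOC Uniform Guidelines) are assumptions for illustration; the source does not state which thresholds SHL applies.

```python
def selection_rates(outcomes):
    """Map each group to its selection rate.

    `outcomes` is an iterable of (group, selected) pairs, where `selected`
    is True when the candidate passed the assessment stage.
    """
    totals, selected = {}, {}
    for group, was_selected in outcomes:
        totals[group] = totals.get(group, 0) + 1
        selected[group] = selected.get(group, 0) + (1 if was_selected else 0)
    return {group: selected[group] / totals[group] for group in totals}


def adverse_impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        raise ValueError("no group has any selected candidates")
    return {group: rate / best for group, rate in rates.items()}


def statistical_parity_difference(rates):
    """Largest gap in selection rate between any two groups."""
    return max(rates.values()) - min(rates.values())


def flag_groups(ratios, threshold=0.8):
    """Groups whose impact ratio falls below the (assumed) threshold."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)
```

For instance, if 5 of 10 candidates in one group and 3 of 10 in another are selected, the second group's impact ratio is 0.6 and it would be flagged for human review under the four-fifths rule.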

We collaborate with customers on an annual model refresh, rebuilding and retraining systems with expanded and updated datasets. The retrained models are thoroughly tested for performance and consistency. Client feedback is actively sought throughout the year to ensure fairness and identify improvement opportunities.

Our 3P AI governance framework, combining advanced platforms, rigorous processes, and people oversight, ensures responsible and optimized AI evolution in the dynamic hiring landscape. We invest in robust data annotation tools and MLOps model monitoring platforms to uphold AI ethics. With quality data ingestion, bias monitoring, responsible model refresh, and transparency, our clients can trust our commitment to meeting today's hiring needs.

Check out our projects at SHL Labs, where our team of experts joins hands to advance innovation in talent acquisition and management.